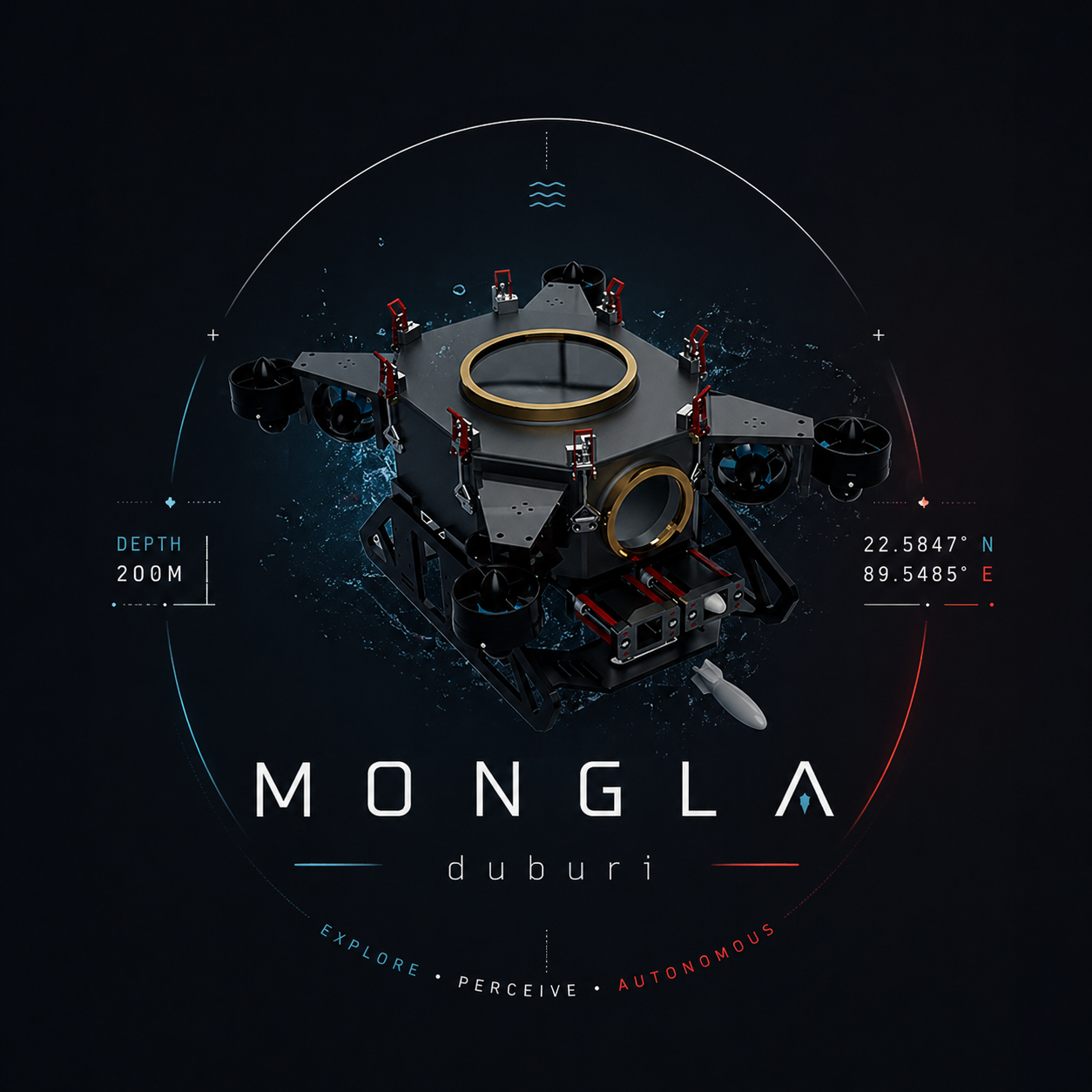

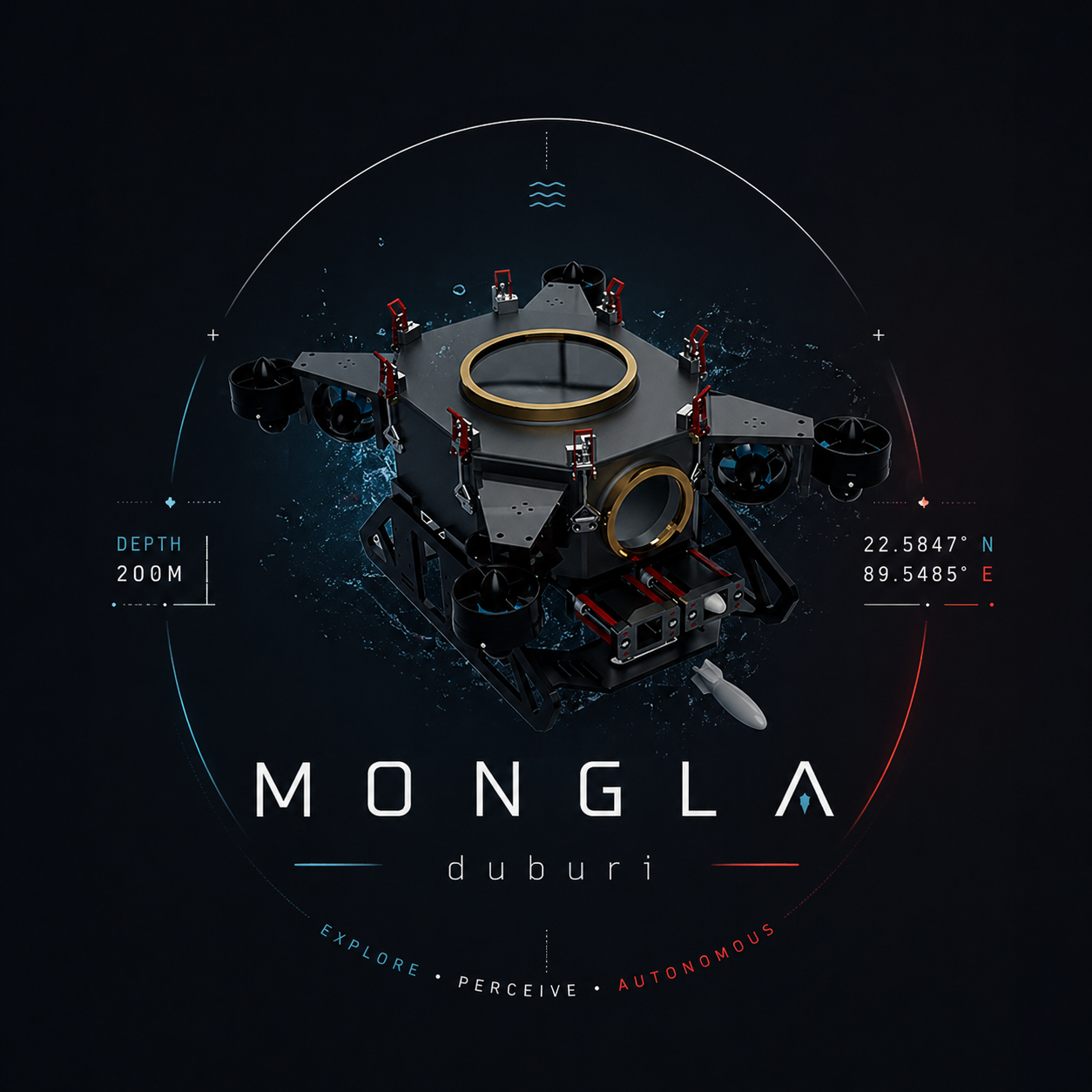

Sundarbans Delta, Bangladesh

Named for the port of Mongla

Octagonal Marine 5083 aluminum hull, 8× Blue Robotics T200 thrusters in vectored_6dof configuration — same ArduSub frame as BlueROV2 Heavy.

| Component | Spec |
|---|---|
| Hull | Octagonal Marine 5083 aluminum, in-house |
| Frame | vectored_6dof (8× T200), same as BlueROV2 Heavy |
| Flight controller | Pixhawk 2.4.8 · ArduSub 4.x · EKF3 |
| Companion SBC | Raspberry Pi 4B · BlueOS · MAVLink router |
| Mission SBC | Nvidia Jetson Orin Nano · all ROS2 nodes |
| Depth sensor | Bar30 (ArduSub AHRS2 altitude) |
| IMU | BNO085 on ESP32-C3 · USB CDC · gyro+accel |
| DVL | Nortek Nucleus1000 · 192.168.2.201 · TCP 9000 |
| Cameras | Blue Robotics Low-Light HD USB (fwd + down) |
| Network | 5-port onboard switch · FathomX PoE tether |
| Payload | Slingshot torpedo · aluminum grabber · solenoid dropper |

Five ROS2 packages, one action surface (/duburi/move), one MAVLink owner. Every command flows through the same registry — CLI, mission runner, and Python API alike.

Mongla uses three control modes depending on the axis. ArduSub owns the 400 Hz inner loops; Python shapes setpoints at 5–20 Hz.

Python sends RC_CHANNELS_OVERRIDE with a thrust percentage for a set duration. With smooth_translate:=true, a trapezoid_ramp shapes the envelope: ease in, cruise, ease out. The ease-out itself is the brake. Afterwards come a 200 ms reverse kick (constant mode) and a 1.2 s settle.
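As a rough illustration of the envelope described above, the sketch below computes a trapezoidal thrust percentage over time and maps it to an RC override pulse width. The function signatures, the ramp length, and the linear 1100 to 1900 µs mapping around a 1500 µs neutral are assumptions made for illustration; the real trapezoid_ramp in the codebase may differ.

```python
def trapezoid_ramp(t, duration, ramp, peak):
    """Thrust percentage at time t (seconds) for a trapezoidal envelope.

    Hypothetical signature: ease in over `ramp` seconds, cruise at `peak`,
    ease out over the final `ramp` seconds of `duration`.
    """
    if t <= 0.0 or t >= duration:
        return 0.0
    # On very short moves the trapezoid degenerates into a triangle.
    ramp = min(ramp, duration / 2.0)
    if ramp <= 0.0:
        return peak
    if t < ramp:
        return peak * t / ramp                # ease in
    if t > duration - ramp:
        return peak * (duration - t) / ramp   # ease out (acts as the brake)
    return peak                               # cruise


def thrust_to_pwm(percent):
    """Map -100..100 % thrust to a pulse width, assuming 1500 µs neutral
    and a symmetric +/-400 µs range."""
    percent = max(-100.0, min(100.0, percent))
    return int(round(1500 + 4.0 * percent))
```

Sampling `trapezoid_ramp` at the setpoint rate and passing the result through `thrust_to_pwm` would give the value written to the forward or lateral channel on each tick.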

Python streams setpoints — SET_ATTITUDE_TARGET for yaw at 10–20 Hz, SET_POSITION_TARGET_GLOBAL_INT for depth at 5 Hz. ArduSub's 400 Hz attitude and position PIDs close the actual loops. Python never fights the firmware.

A Python daemon thread reads yaw_source, computes proportional error, and streams Ch4 yaw-rate overrides at 20 Hz. Translation commands run on top — they only write Ch5/Ch6, leaving Ch4 to the lock. Source-agnostic: AHRS, BNO085, or DVL heading.
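The proportional step of such a heading lock can be sketched as follows. The gain, the clamp, and the convention that pulse widths above 1500 µs yaw toward increasing heading are assumptions for illustration, not values taken from the Mongla codebase.

```python
def wrap_180(angle_deg):
    """Wrap an angle difference into [-180, 180) degrees."""
    return (angle_deg + 180.0) % 360.0 - 180.0


def yaw_lock_pwm(target_deg, current_deg, kp=4.0, max_offset=200):
    """Proportional yaw-rate override for Ch4.

    kp (µs per degree) and max_offset (µs) are hypothetical tuning values.
    """
    err = wrap_180(target_deg - current_deg)   # shortest signed turn
    offset = max(-max_offset, min(max_offset, kp * err))
    return 1500 + int(round(offset))
```

Wrapping the error first matters near north: a target of 350° with a current heading of 10° should produce a 20° turn one way, not a 340° turn the other way.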

YOLO v26 runs on the Jetson GPU at ~30 Hz. ByteTrack assigns stable IDs across frames. VisionState bridges detections to control commands.

Bounding box geometry drives three independent control channels simultaneously. The camera frame is a normalized coordinate system — errors feed directly into P-gains.

| Verb | Active axes | Settling condition |
|---|---|---|
| vision_align_yaw | Ch4 only | \|cx_err\| < deadband for N frames |
| vision_align_lat | Ch6 only | \|cx_err\| < deadband |
| vision_align_depth | depth setpoint | \|cy_err\| < deadband |
| vision_hold_distance | Ch5 | bbox_h_frac ≈ target ± tol |
| vision_align_3d | yaw+fwd+depth | all axes settled simultaneously |

    ros2 param set /duburi_manager vision.kp_yaw 80.0
    ros2 param set /duburi_manager vision.deadband 0.06
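To make the mapping from bounding box to command concrete, here is a minimal sketch. It assumes box coordinates are normalized to 0..1 of the frame, that errors are scaled to -1..1 about the frame centre, and that a gain such as `vision.kp_yaw` is expressed in percent of full command per unit error. The `BBox` type and both function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BBox:
    cx: float  # box centre x, 0..1 of frame width
    cy: float  # box centre y, 0..1 of frame height
    h: float   # box height as a fraction of frame height (bbox_h_frac)


def bbox_errors(box, target_h_frac):
    """Return (cx_err, cy_err, dist_err) from one detection."""
    cx_err = 2.0 * (box.cx - 0.5)      # + means target is right of centre
    cy_err = 2.0 * (box.cy - 0.5)      # + means target is below centre
    dist_err = target_h_frac - box.h   # + means box too small, so approach
    return cx_err, cy_err, dist_err


def p_command(err, kp, deadband, limit=100.0):
    """Proportional command in percent, zero inside the deadband."""
    if abs(err) < deadband:
        return 0.0
    return max(-limit, min(limit, kp * err))
```

With the parameter values shown above (kp_yaw 80.0, deadband 0.06), a target centred at three quarters of the frame width would yield a 40 % yaw command under these assumptions.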

With --tracking true, VisionState reads from /tracks instead of /detections. The ByteTrack+Kalman bbox is smoother and has stable IDs, which helps with slow-moving targets, occlusion, and low-confidence frames. Raw detections have lower latency (no tracking buffer).

Three sensor paths, one clean interface. YawSource is an ABC — swap the backend with one ROS param. EKF3 on Pixhawk handles IMU + mag + baro fusion at 400 Hz.
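One way such an interface could look is sketched below. Only the name YawSource and the fact that it is an ABC come from the text; the method name, the callback-based backend, and the factory are hypothetical stand-ins for the real AHRS, BNO085, and DVL backends.

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict, Optional


class YawSource(ABC):
    """Common interface for every heading backend."""

    @abstractmethod
    def read_yaw_deg(self) -> Optional[float]:
        """Return heading in degrees [0, 360), or None if no sample."""


class CallbackYawSource(YawSource):
    """Hypothetical backend that wraps any zero-argument getter."""

    def __init__(self, getter: Callable[[], Optional[float]]):
        self._getter = getter

    def read_yaw_deg(self) -> Optional[float]:
        value = self._getter()
        return None if value is None else value % 360.0


def make_yaw_source(name: str,
                    backends: Dict[str, Callable[[], YawSource]]) -> YawSource:
    """Pick a backend by name, e.g. the value of a yaw_source parameter."""
    try:
        return backends[name]()
    except KeyError:
        raise ValueError(
            f"unknown yaw_source {name!r}; expected one of {sorted(backends)}"
        ) from None
```

Because callers only see `read_yaw_deg`, the heading lock does not need to know which sensor produced the value.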

All subsystems working together: perception → state → control → actuation → sensing, in one closed loop at multiple timescales.

RoboSub is an international student competition for fully autonomous underwater vehicles. Tasks test perception, navigation, manipulation, and mission management — the exact capabilities Mongla is built for.

- set_depth -0.8), engage heading lock toward gate bearing
- vision_align_yaw centres it horizontally
- vision_align_lat or lateral offset moves AUV to correct side of centre
- move_forward through gate with heading lock; DVL confirms passage distance
- move_lateral to correct offset, heading lock maintains forward direction
- vision_align_3d centres AUV horizontally over correct half using downward camera
- vision_align_3d --axes yaw,depth aligns torpedo tube with target opening
- vision_hold_distance holds correct standoff for torpedo trajectory
- vision_align_yaw faces it
- vision_align_3d to collect floating trash objects

A complete RoboSub run as a Mongla mission script. One YAML-like DSL drives all subsystems in sequence.

    # missions/robosub_prequal.py
    def run(duburi, log):
        duburi.arm()
        duburi.set_depth(-0.8)
        duburi.lock_heading(target=0, timeout=180)

        # ── GATE ──────────────────────────────
        log("approaching gate")
        duburi.vision.find(camera='laptop', target='gate', sweep='yaw_right')
        duburi.vision.yaw(target='gate', duration=10, camera='laptop')
        duburi.move_forward_dist(distance_m=4.0, gain=60)

        # ── BIN ───────────────────────────────
        log("finding bin")
        duburi.set_depth(-1.5)
        duburi.vision.lock(axes='yaw,forward,depth', target='bin',
                           camera='downward', duration=12)
        duburi.drop()  # solenoid dropper

        # ── TORPEDO ───────────────────────────
        duburi.set_depth(-0.8)
        duburi.vision.lock(axes='yaw,depth', target='torpedo_board',
                           distance=0.4, duration=15)
        duburi.fire_torpedo(1)

        duburi.unlock_heading()
        duburi.disarm()

Add missions/your_name.py exposing def run(duburi, log), rebuild duburi_planner, and it appears in ros2 run duburi_planner mission --list instantly. No registry edit needed.


Only Ch4 is driven. The AUV yaws until |cx_err| < deadband. Heading lock is suspended during this; vision becomes the yaw authority for the duration.

Target bbox_h_frac maps to physical distance. Ch5 drives proportionally to dist_err. Approach if bbox too small; back off if too large.

Yaw + forward + depth errors computed every 50 ms from the same detection. Each axis has its own deadband and gain — settle condition is all three simultaneously within threshold.
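The "all axes settled simultaneously" condition can be sketched as a streak counter that resets whenever any axis leaves its deadband. The class name, the axis keys, and the number of frames required are hypothetical; only the idea of per-axis deadbands and a joint settle condition comes from the description above.

```python
class SettleDetector:
    """Reports settled once every axis stays inside its deadband
    for a required number of consecutive frames."""

    def __init__(self, deadbands, frames_required=5):
        self.deadbands = dict(deadbands)        # axis name -> deadband
        self.frames_required = frames_required  # hypothetical default
        self._streak = 0

    def update(self, errors):
        """errors: axis name -> current error for this detection."""
        all_inside = all(
            abs(errors[axis]) < band for axis, band in self.deadbands.items()
        )
        # A single axis outside its deadband resets the whole streak.
        self._streak = self._streak + 1 if all_inside else 0
        return self._streak >= self.frames_required
```

Called once per control tick (every 50 ms in the description above), `update` returns True only after the full streak, which avoids declaring success on a single lucky frame.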

ArduSub SITL + Gazebo Harmonic gives a faithful vectored_6dof 8-thruster sandbox. Mongla runs identically against sim or real hardware — only the connection profile changes.

    sim_vehicle.py \
      -L RATBeach \
      -v ArduSub \
      -f vectored_6dof \
      --model=JSON \
      --out=udp:0.0.0.0:14550 \
      --out=udp:127.0.0.1:14551 \
      --console

    gz sim -v 3 -r \
      bluerov2_underwater.world
    # GZ_SIM_RESOURCE_PATH must include
    # bluerov2_gz/models and bluerov2_gz/worlds

    ros2 run duburi_manager start
    duburi arm
    duburi set_depth --target -0.5
    duburi move_forward \
      --duration 5 --gain 60
    duburi disarm

The simulated vehicle uses the same vectored_6dof 8-thruster ArduSub frame as Duburi 4.2. Mass, hull shape, and payload differ, but all motion verbs, MAVLink messages, and sensor paths work identically. Develop in sim, deploy at the pool without code changes.

Mongla is built on well-established robotics, control theory, and computer vision foundations. These are the primary sources for the concepts used.

Proportional-Integral-Derivative control — the foundation of depth and heading stabilisation. ArduSub implements cascaded PID (angle → rate) for attitude and altitude.
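For readers new to the idea, a textbook PID step and a cascaded angle-to-rate arrangement can be sketched as below. This is a generic illustration with hypothetical gains and a simple anti-windup rule; it is not ArduSub's implementation.

```python
class PID:
    """Minimal PID controller with output clamping."""

    def __init__(self, kp, ki, kd, out_limit):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_limit = out_limit
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt):
        if dt <= 0.0:
            raise ValueError("dt must be positive")
        self.integral += error * dt
        derivative = (
            0.0 if self.prev_error is None
            else (error - self.prev_error) / dt
        )
        self.prev_error = error
        out = self.kp * error + self.ki * self.integral + self.kd * derivative
        clamped = max(-self.out_limit, min(self.out_limit, out))
        if clamped != out:
            # Anti-windup: do not accumulate while the output is saturated.
            self.integral -= error * dt
        return clamped


def cascaded_step(angle_err_deg, rate_meas_dps, angle_kp, rate_pid, dt):
    """Outer P loop turns angle error into a rate setpoint;
    the inner PID then tracks that rate."""
    rate_setpoint = angle_kp * angle_err_deg
    return rate_pid.update(rate_setpoint - rate_meas_dps, dt)
```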

Extended Kalman Filter fuses IMU, magnetometer, barometer, and (optionally) DVL to produce the vehicle's pose estimate. ArduSub EKF3 runs at 400 Hz onboard Pixhawk.

You Only Look Once — single-pass CNN for real-time detection. YOLO v26 on Jetson GPU delivers ~30 Hz detection of gates, buoys, bins, and other RoboSub objects.

ByteTrack associates every detection (not just high-confidence ones) to tracks, bridging occlusion gaps. Per-track Kalman smooths bounding box jitter for stable vision control.

Doppler Velocity Log measures velocity relative to seabed via acoustic Doppler shift. Integrating v(t) over time gives position — enabling GPS-denied closed-loop distance moves.
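The integration step can be illustrated with a small trapezoidal sum over timestamped velocity samples. The sample format and the function name are assumptions for illustration; a real DVL driver would also need to handle dropouts and invalid bottom-lock flags.

```python
def integrate_distance(samples):
    """Integrate forward velocity over time with the trapezoidal rule.

    samples: list of (t_seconds, v_metres_per_second) pairs in time order.
    Returns the distance travelled in metres.
    """
    total = 0.0
    for (t0, v0), (t1, v1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0.0:
            continue  # skip duplicate or out-of-order timestamps
        total += 0.5 * (v0 + v1) * dt
    return total
```

A distance move would stop commanding thrust once the running total reaches the requested distance.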

Robot Operating System 2 (Humble) provides pub/sub, actions, and parameters. MAVLink is a lightweight binary protocol used to command ArduSub and receive telemetry.